AI Moves Fast. Trust Doesn’t.

The Aligned Sovereign Intelligence Institute funds AI safety research and audits AI risk for enterprises and institutional capital.

The Challenge

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Center for AI Safety, 2023

This statement was signed by Geoffrey Hinton, Demis Hassabis, Ilya Sutskever, executives from Google, Microsoft, xAI, and OpenAI, PhD researchers worldwide, and our entire team. It is why we created the ASI Grant Protocol.

Overview

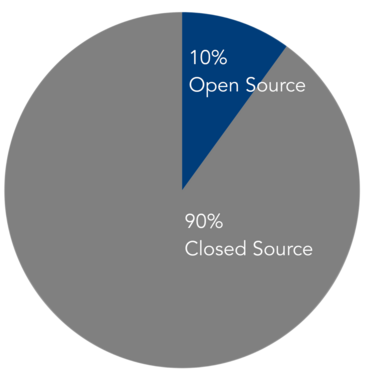

The global AI ecosystem is projected to reach approximately $16.2 trillion by 2030, yet funding remains dangerously centralized. Closed-source companies concentrated in the US have captured 90% of available funding (approximately $1.35T), creating a geographic and financial monopoly on AI development. Learn more about the Aligned Sovereign Intelligence Institute here.

Our Mission

Our mission is to close the $1.35T investment gap between closed-source and open-source AI research. To do that, we created two distributed ledger technology (DLT) grant services for AI alignment research (one public, one private) and an AI risk platform for investor and corporate due diligence teams that supports our grant mission. If you are a potential capital partner, please click here. Researchers and alignment partners can connect with us on LinkedIn here. Thank you.

ASI Signals

ASI Signals is the Consumer Reports of AI risk: the first independent platform that stress-tests AI models on actual production cost, safety, and reliability, built for investors and enterprises that can’t afford to be wrong. To learn more, read the FAQ and click here to request access.

Public Grants

We introduced a public peer funding service, the Aligned Beacon Commons (ABC), where peer review and funding happen transparently on-chain. Please read the FAQ and click here to explore the app.

Private Grants

We are researchers funding AI alignment research, with roots in Data Science Community (moderated), an education hub with over 1.1M members on LinkedIn. Click here to let us know you are interested in a grant.

ASI Research

We built one of the world’s first dedicated AI alignment databases, where researchers can explore and learn from their peers’ work for free. It covers thousands of papers from the largest research repositories on the Internet.

The ASI Benchmark reports on alignment against global standards set by major institutions, including ISO/IEC 42001, the NIST AI Risk Management Framework, and the EU AI Act, alongside established AI safety research projects.

We developed a comprehensive, searchable glossary of 200+ AI alignment and safety terms across 20 categories, featuring interactive citation analytics, academic references, and a modern, responsive interface.

Alignment Dilemma

This video explains the challenge.

About ASI

Transparency

The ASI governance dashboards demonstrate our commitment to transparency with live data on ASI Protocol allocation, circulation, treasury, and more.

Architecture

ASIP’s architecture is built for efficiency and simplicity. It operates two governance utility tokens that fund AI safety research through KPIs and provide treasury predictability through hard assets.

Learn More

ASIP uses a dual-token design: a governance token and a treasury-focused token. It allocates 50% to research funding and is anti-arbitrage by design, so that off-chain research performance carries meaningful weight on-chain.

KPI Funding

This article describes why legacy venture capital investment needs to evolve in the fast-paced, high-stakes AI era. ASIP is a step in that direction: a model where merit earns capital.

This video explains our approach.